Senate Hearing Created a Clash With Google Over the Definition of ‘Persuasive’ Technology

Em McPhie

WASHINGTON, June 27, 2019 — A Tuesday Senate Commerce Subcommittee hearing, on “Optimizing for Engagement: Understanding the Use of Persuasive Technology on Internet Platforms,” became an open invitation for senators to attack the business model of the technology industry.

At the hearing, Google confronted bipartisan skepticism about its claimed neutrality, and about its power as a company. (See our story, “Bipartisan Group of Senators Stoke Fears About Google’s Neutrality and Influence in 2020 Election.”)

Other witnesses and senators piled on, particularly when the Google witness claimed that the search engine giant does not use “persuasive” technologies.

Instead, said Maggie Stanphill, Google’s user experience director, Google’s products are built with “privacy, security, and control for the user” in an effort to build a “lifelong relationship.”

“I don’t know what any of that meant,” replied Ranking Member Brian Schatz, D-Hawaii.

Sen. Richard Blumenthal, D-Conn., also found Stanphill’s assertion “difficult to believe.”

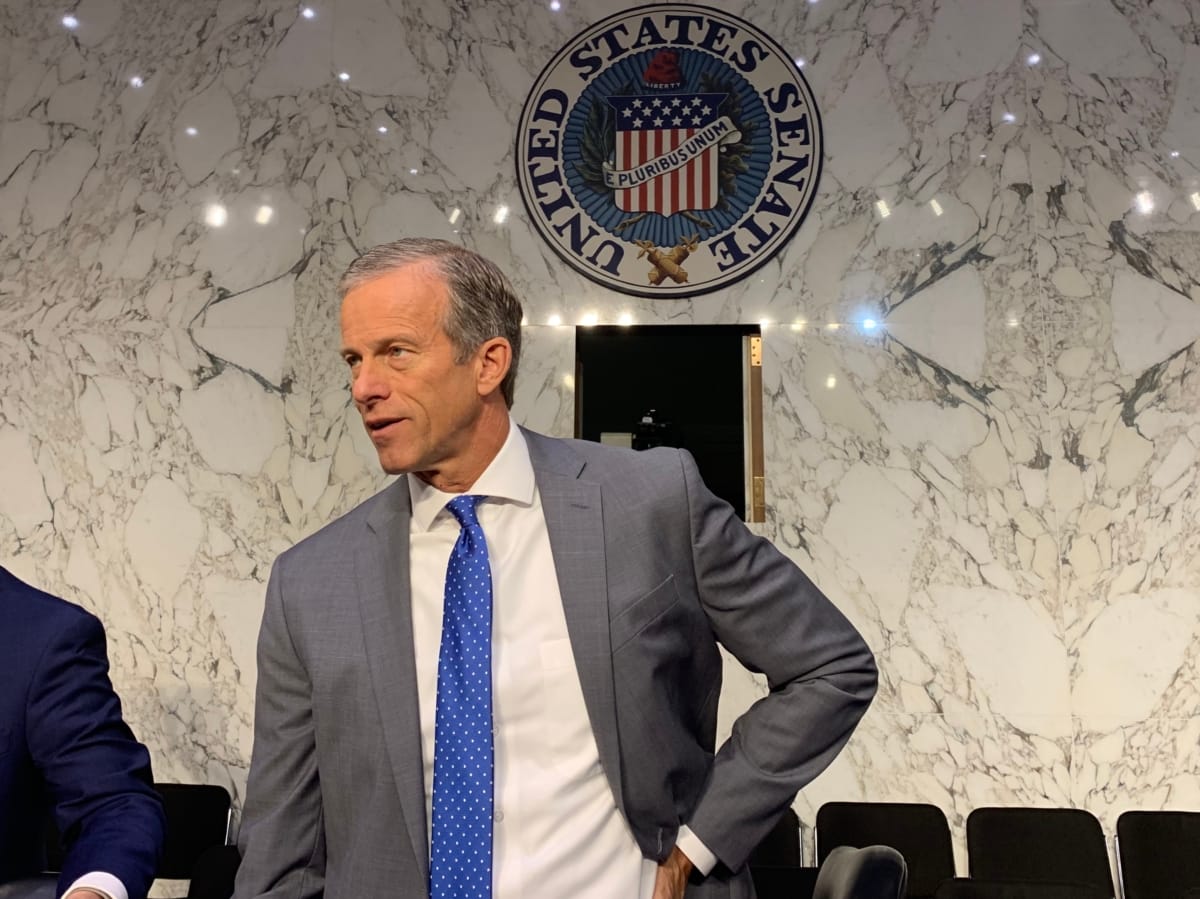

Subcommittee Chairman John Thune, R-S.D., took a darker and more conspiratorial tack: “The powerful mechanisms behind these platforms meant to enhance engagement also have the ability, or at least the potential, to influence the thoughts and behaviors of literally billions of people.”

Thune said that “the use of artificial intelligence and algorithms to optimize engagement can have an unintended and possibly even dangerous downside.”

Using the politically loaded term ‘persuasive’ technology

Part of the disconnect may stem from the event’s title, which introduced the politically loaded term “persuasive” technology.

Companies such as Google have a significant business incentive to take as narrow a view as possible of that term, suggested Rashida Richardson, director of policy research at the AI Now Institute.

Center for Humane Technology Executive Director Tristan Harris argued that, in fact, “persuasive technology is everywhere.”

Social media platforms are carefully designed to be addictive because their business model relies on maintaining user engagement, he said. Twitter’s “pull to refresh” has the same addictive qualities as a slot machine, while Instagram’s infinitely scrolling feed gives users no signal of when to stop.

Polarization and the so-called “callout culture” are a direct result of the focus on keeping users’ attention, because moral outrage and succinct statements, rather than nuanced, logic-based arguments, generate the highest levels of engagement.

However, there’s no easy way to address these issues because the fundamental problem is the business model itself, said Harris.

The power and reach of artificial intelligence algorithms are far more extensive than many people realize. Harris highlighted research showing that AI can predict an individual’s personality traits with 80 percent accuracy based on mouse movements and click patterns alone.

Platforms are using artificial intelligence and machine learning to build increasingly detailed and accurate models of behavior; for example, YouTube uses such models to select the autoplay content most likely to keep users watching.

Not only do the platforms make their media as addictive as possible, they actively make it difficult for users to leave. When Facebook users attempt to delete their accounts, the platform shows them the profiles of five users who will supposedly miss them, carefully selected based on past engagement, said Harris.

All of these tactics create what Harris called an “asymmetry of power,” meaning that users believe that they have control when they actually don’t.

Artificial intelligence is having a significant impact on society as well as on individuals. Many companies have attempted to use algorithms to determine who should be hired, released on bail, given loans, and more, oftentimes leading to highly biased and flawed outcomes. These algorithms are primarily developed and deployed by just a few powerful companies, giving them dangerously immense power to shape society, said Richardson.

Harris agreed, comparing humans’ use of these immensely powerful technologies to “chimpanzees with nukes.”

Senators raise concern about algorithms’ impact on children

Multiple senators expressed particular concern over the impact of these algorithms on children. Children can inadvertently stumble on extremist material by being drawn to shocking content or by using search terms that carry an unknown subtext, said Sen. Tom Udall, D-N.M. This can spiral into radicalization.

Harris cited various examples of this phenomenon, such as a video explaining a diet being followed by portrayals of anorexia, or a video about the moon landing being followed by flat earth conspiracy theories.

Children do not merely stumble on this content by accident; YouTube may actually be “systemically” serving it to them, said Sen. Ed Markey, D-Mass., who is planning to introduce the “Kids Internet and Safety Act” to stop autoplay and other forms of commercialization that may be targeting children.

Stanphill was adamant in stating that Google had already taken steps to fix the problems under discussion. Her claims were met with skepticism from both senators and other witnesses.

(Photo of Sen. John Thune at the hearing on Tuesday by Emily McPhie.)
