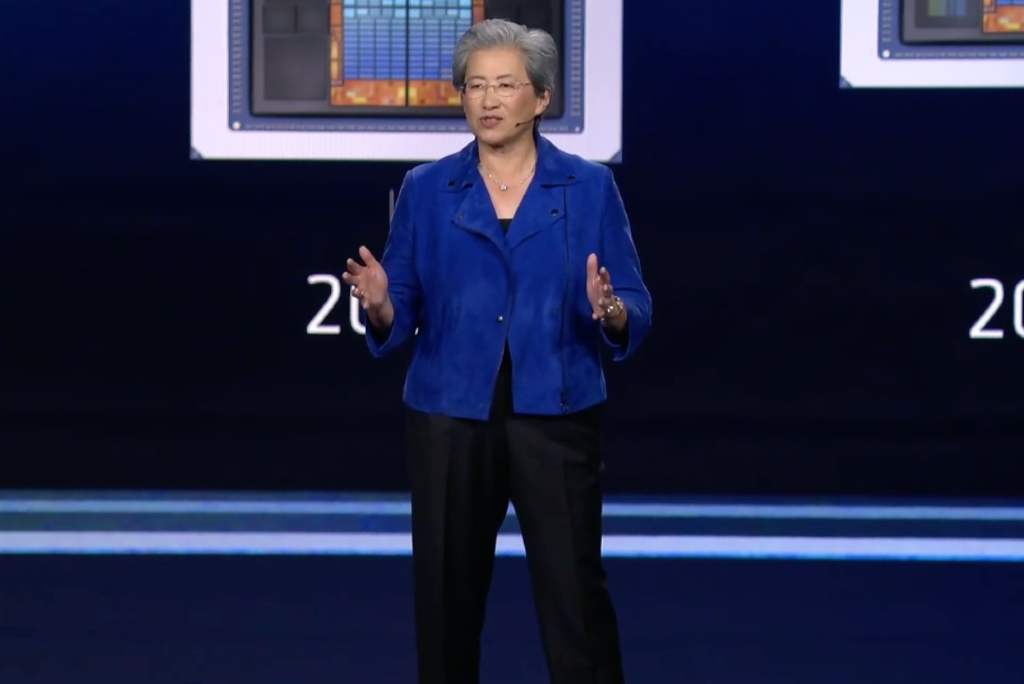

AMD Showcases Growing AI Hardware Arsenal at CES 2026

Lisa Su says AMD chips power nonstop AI with OpenAI, Microsoft, and additional partnerships.

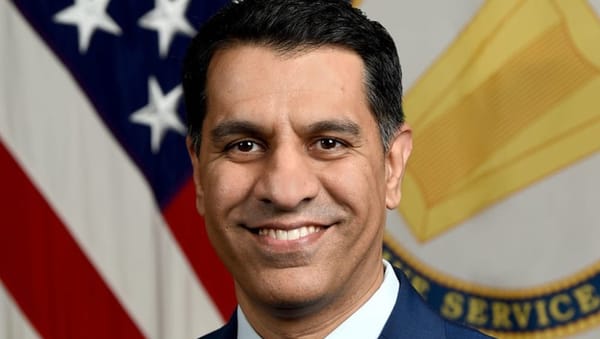

Akul Saxena

LAS VEGAS, Jan. 6, 2026 — Advanced Micro Devices CEO Lisa Su showcased a new generation of artificial-intelligence chips and large-scale computing systems at her Consumer Electronics Show kickoff keynote on Monday evening. Su said that the Silicon Valley-based chipmaker is positioning itself to meet soaring demand from companies building AI models that reason, plan and operate continuously.

As AI moves beyond the experimental stage and becomes core infrastructure, it is forcing a redesign of how computers are built, connected and powered, she said.

AI is used by an estimated 1 billion people worldwide, with adoption projected to reach about 5 billion as the technology becomes embedded in work, health care, transportation and consumer devices, Su said.

That growth has driven a historic surge in computing demand, pushing global AI computing capacity from roughly one zettaflop in 2022 to more than 100 zettaflops today, she said. A zettaflop equals one sextillion calculations per second, yet available capacity still falls well short of what advanced AI systems now require.

To address that gap, AMD introduced Helios, a rack-scale system designed for data centers. Rack-scale computing allows entire racks of servers to operate as a single machine, enabling AI models to run across thousands of processors at once.

Helios is built around AMD’s Instinct MI455, a specialized AI chip designed to train and run large models, alongside the company’s EPYC central processors and high-speed networking that moves data rapidly between chips with minimal delay.

AI running continuously now

AMD said the system reflects how AI is now used in practice: running continuously rather than switching on only during training.

Partnerships were central to AMD’s pitch. OpenAI President and co-founder Greg Brockman said AI models are shifting from answering single questions to acting as agents that work through complex tasks over minutes, hours or longer.

Brockman said OpenAI is using AMD systems to support growing enterprise demand, including AI agents that write software, analyze data and assist scientific research. He said future AI systems could act as persistent digital assistants, completing tasks autonomously while users focus elsewhere.

AMD also highlighted partnerships with companies applying AI beyond text. Luma AI said it uses AMD hardware to generate and edit video, a workload that requires processing tens of thousands of data “tokens” per second, far more than typical large language models.

Beyond data centers, AMD introduced new Ryzen AI processors for personal computers, designed to run AI models directly on devices. Running AI locally can reduce delays, lower costs and keep sensitive data on the user’s machine rather than in the cloud.

Su said the announcements reflect a broader shift away from gadget-driven tech cycles toward long-term infrastructure investment.

“Helios and our new AI processors reflect how customers are deploying AI in the real world,” Su said. “This is about delivering systems that can run at scale, all the time.”

The competition is no longer just about designing faster chips but about controlling entire AI stacks, from processors and networking to software and data-center architecture. AMD aims to position itself to compete with Nvidia by selling integrated, rack-scale platforms and open tools as demand shifts from individual components to full AI infrastructure.
