NVIDIA Unveils New AI Systems as Computing Demand Surges

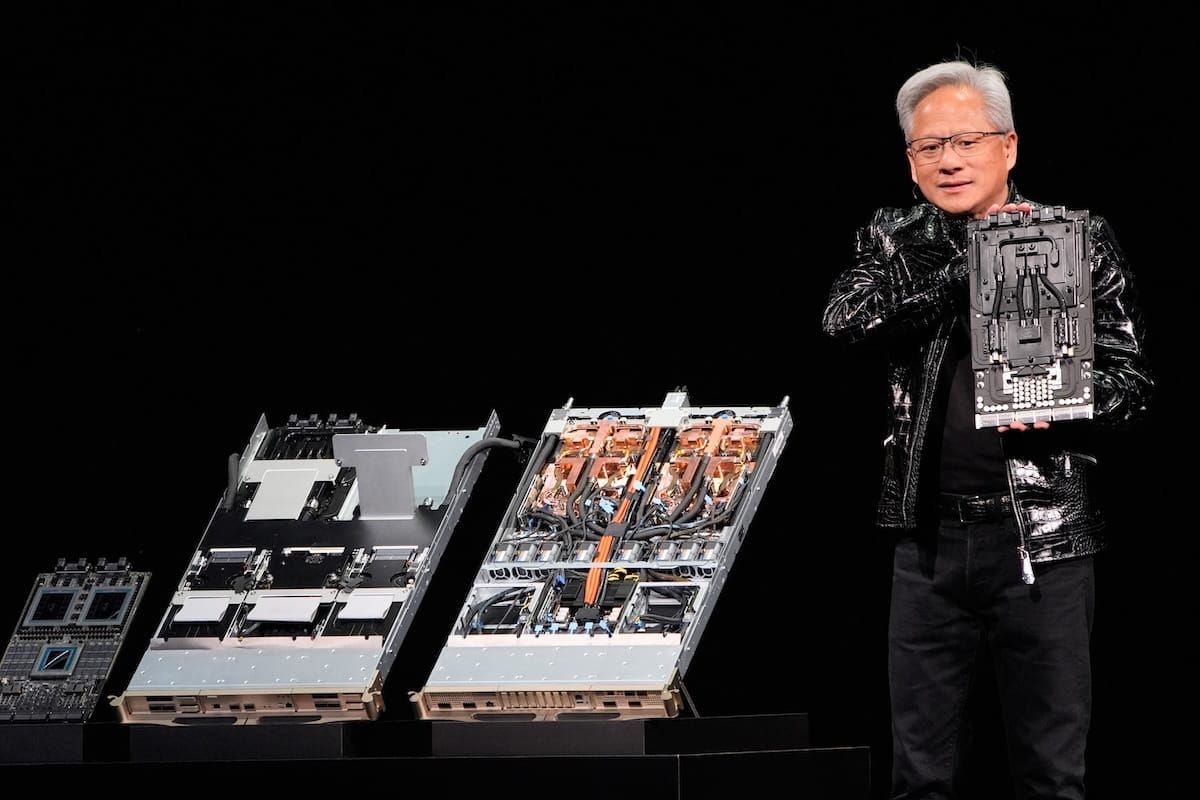

CEO Jensen Huang said the company's latest architecture integrates chips, networking and cooling to support next-generation AI workloads.

Akul Saxena

LAS VEGAS, Jan. 5, 2026 — On Monday, Nvidia unveiled a wide-ranging set of artificial intelligence technologies and computing systems designed to train robots, autonomous vehicles and next-generation AI models.


Jensen Huang, CEO of the graphics processing unit manufacturer, which is the world’s largest company by market capitalization, said AI has entered a phase that is reshaping software, hardware and machines operating in the physical world.

Speaking at a press event at the Las Vegas Convention Center ahead of the Consumer Electronics Show here, Huang said AI was no longer an added layer on top of traditional software: Modern applications now rely on AI models that interpret context and generate outputs dynamically, rather than executing fixed, prewritten instructions.

That shift, Huang said, helps explain the sharp rise in demand for computing power, which he put at roughly $10 trillion over the past decade. Unlike earlier technology cycles, which were driven largely by research or consumer adoption, the current surge is being fueled by companies and governments modernizing core systems they already depend on, Huang said.

Why this moment is different

Huang said a turning point is occurring with the rise of reasoning models that devote additional time and computing power to working through problems, a change that has multiplied demand for advanced chips and high-speed networking.

Huang also focused on physical AI, or systems designed to operate in the real world. While today’s AI models excel at language, images and speech, he said they still lack a basic understanding of the physical world, such as gravity, inertia, and cause and effect. That limitation has slowed progress in robotics and autonomous machines.

Nvidia’s response, Huang said, is large-scale simulation combined with synthetic data. He introduced Nvidia Cosmos, a platform that generates realistic virtual environments for training robots and self-driving systems. Cosmos can ingest real driving data and sensor information and use them to generate large numbers of new scenarios that would be dangerous or expensive to capture in the physical world.

The company also highlighted Alpamayo, an autonomous driving system designed to reason about its actions and planned paths rather than react instinctively. Huang said the system was trained using a combination of human driving examples and synthetic data generated through Cosmos.

Hardware remained central to Nvidia’s message. Huang said improvements in chip manufacturing alone were no longer sufficient to keep pace with the growth of AI models. As a result, Nvidia has been redesigning processors, memory and networking together as integrated systems.

AI systems now run constantly, Huang said

He introduced the Vera Rubin computing platform, which combines central processors, graphics processors and networking into a single architecture optimized for AI training and continuous operation. Huang said AI systems now run constantly rather than only during training, making efficiency increasingly critical as AI spreads into vehicles, factories and consumer devices.

Networking was another emphasis. Huang said AI workloads require enormous volumes of data to move quickly between thousands of processors, making low-latency connections essential. Nvidia unveiled faster networking systems designed to carry that traffic more efficiently inside large data centers.

Energy use also featured prominently. Huang said newer Nvidia systems support liquid and hot-water cooling, helping data centers reduce electricity consumption and avoid building excess capacity. While overall demand for computing continues to rise, he said efficiency gains are becoming essential.

Throughout the keynote, Huang emphasized open-source development, saying more advanced AI models and training tools are being shared publicly so developers and researchers can build on existing work. Nvidia, he said, has opened up its training, data and simulation stack to encourage broader experimentation.

Huang framed the announcements as evidence that AI has moved from novelty to core infrastructure, reshaping industries from manufacturing to transportation. What distinguishes the current moment, he said, is that software, hardware and physical systems are all changing at the same time.

“This is an industrial transformation,” Huang said.