Drew Gravitt: Powering the AI Era Isn’t Just an Energy Problem, It’s an Infrastructure One

As AI data center electricity demand is projected to double by 2030, the industry's biggest bottleneck is no longer compute, but energy

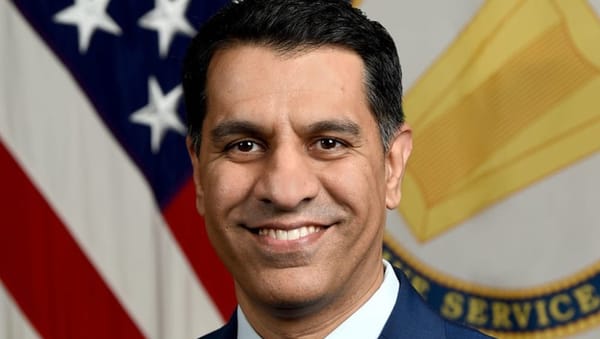

Drew Gravitt

The conversation around artificial intelligence infrastructure often starts – and ends – with electricity demand. U.S. data centers consumed 183 terawatt-hours (TWh) of electricity in 2024, representing more than 4% of the country’s total electricity consumption for that year. By 2030, this figure is projected to grow by 133% to 426 TWh. While these projections are staggering, the real challenge facing the industry in 2026 isn’t simply the aggregate volume of power AI will require.

Instead, the industry is grappling with a fundamental shift in how quickly, reliably, and responsibly power can be delivered to the site. As of February 2026, the U.S. landscape consists of 577 operating data centers with 14,187 megawatts (MW) of capacity, but the pipeline is swelling with 666 planned projects that could add 176 gigawatts (GW) of additional capacity.

This indicates that the data center constraint has moved definitively upstream, and we’re entering an era where compute is no longer the primary bottleneck. Instead, power distribution and energy management have become the defining constraints for AI data center growth.

Identifying the true bottlenecks

Bringing new AI data center capacity online is taking longer than many people expect. While generation capacity receives much of the attention, the most acute bottlenecks today lie in the connection to the grid rather than the supply of electrons.

In several regions across the country, developers are waiting years to gain approval to tap into the electric utility. This is compounded by the fact that power markets are governed locally, leading to a patchwork of inconsistent requirements around cost, timing, and compliance that turns site selection into a series of educated bets.

Additionally, lead times for large power transformers now stretch from 18 to 36 months, and generator and turbine lead times are measured in years, not months. Substation capacity, rather than land or buildings, has also become a major factor in data center site selection, defining feasibility and schedules before contracts are ever signed with hyperscalers or developers.

The rise of the AI factory and grid independence

This environment has given rise to a new category of digital infrastructure known as the "AI factory." Unlike traditional enterprise data centers, an AI factory is a production facility for high-value digital output, supporting the entire lifecycle from large language model (LLM) training to tuning to inference. As a result of bottlenecks and the challenges of connecting to the traditional grid, developers are increasingly pursuing a strategy of grid independence.

Roughly 30% of all planned data center capacity – 106 GW over 10 years – is now being designed as behind-the-meter prime power. These operators are installing mobile or stationary natural gas generators, turbines, fuel cells, solar, and batteries that can be deployed quickly to meet immediate time-to-power demand. In this new era of “bridge power,” capital is underwriting megawatts rather than square footage, prioritizing sites that feature a credible behind-the-meter primary power strategy paired with an eventual grid tie for resiliency and monetization of bridge power assets.

Managing volatile load profiles

The technical challenge of the AI factory also lies in its transients. AI power consumption fluctuates rapidly during batch processing, with swings such as a jump from 6 MW to 30 MW – depending on the data center size – occurring in under 300 milliseconds. Without sub-second mitigation, these volatile transients can destabilize the frequency and voltage of a standard grid-independent system.
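To put that transient in concrete terms, a jump from 6 MW to 30 MW over a full 300 milliseconds works out to a ramp of roughly 80 MW per second. The short Python sketch below does that arithmetic and estimates how much of the step fast storage would have to cover while generation catches up. The generator ramp limit of 5 MW per second is a purely illustrative assumption, not a figure from this article or from any equipment datasheet, and the function names are hypothetical.

```python
def ramp_rate_mw_per_s(start_mw, end_mw, duration_s):
    """Average rate of change of load across the step, in MW per second."""
    return (end_mw - start_mw) / duration_s


def storage_gap_mw(start_mw, end_mw, duration_s, gen_ramp_mw_per_s):
    """Power that fast storage must supply at the end of the step,
    assuming generation can only ramp at gen_ramp_mw_per_s (hypothetical)."""
    gen_output_mw = min(end_mw, start_mw + gen_ramp_mw_per_s * duration_s)
    return end_mw - gen_output_mw


# Figures from the text: 6 MW to 30 MW in 300 milliseconds.
rate = ramp_rate_mw_per_s(6.0, 30.0, 0.3)

# ASSUMPTION: a generator that can pick up 5 MW per second (illustrative only).
gap = storage_gap_mw(6.0, 30.0, 0.3, gen_ramp_mw_per_s=5.0)

print(f"Load ramp: {rate:.1f} MW/s")
print(f"Shortfall for storage to cover: {gap:.1f} MW")
```

Under these assumed numbers, nearly the whole step lands on the storage system, which is why sub-second buffering matters so much in an islanded design.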

To support AI-scale power volatility, operators are pairing steady generation with ultra-fast storage systems that can instantly absorb or release power to maintain stability. When generation is combined with high C-rate batteries or flywheels for millisecond buffering and with software-based smoothing algorithms, peak demand can be reduced by up to 30%, minimizing strain on upstream infrastructure and extending the life of mechanical equipment.
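One simple way to picture software-based smoothing is a trailing moving average: generation follows the smoothed setpoint while storage charges or discharges to cover the difference. The Python sketch below is a minimal illustration of that idea, not a description of any vendor's algorithm. The load trace, the window length, and all function names are assumptions made for the example, and it ignores storage state of charge, losses, and power limits.

```python
def smooth_load(load_mw, window):
    """Trailing moving average; generation is assumed to follow this setpoint."""
    smoothed = []
    for i in range(len(load_mw)):
        start = max(0, i - window + 1)
        chunk = load_mw[start:i + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


def storage_dispatch(load_mw, gen_mw):
    """Per-step storage power: positive means discharging, negative means charging."""
    return [load - gen for load, gen in zip(load_mw, gen_mw)]


def peak_reduction_pct(load_mw, gen_mw):
    """How much lower the generation peak is than the raw load peak, in percent."""
    return (max(load_mw) - max(gen_mw)) / max(load_mw) * 100.0


# ASSUMPTION: a made-up load trace (MW) that swings between 6 and 30.
load = [6, 6, 30, 30, 6, 6, 30, 30, 6, 6]

gen = smooth_load(load, window=4)
storage = storage_dispatch(load, gen)

print("Generation setpoint (MW):", gen)
print("Storage dispatch (MW):  ", storage)
print(f"Peak seen by generation falls by {peak_reduction_pct(load, gen):.0f}%")
```

The percentage this prints depends entirely on the invented trace and window, so it should not be read as evidence for or against the figure cited above. The point is only the division of labor: slow assets see the average, fast assets see the spikes.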

Software that softens the load

Central to this infrastructure pivot is an advanced control system that leverages AI and machine learning for predictive intelligence. These systems allow operators to establish real-time load profiles and use digital twins to visualize how power behaves under real-world scenarios before committing infrastructure spend.

This software-driven orchestration allows data centers to act as virtual power plants, or VPPs, that can dynamically adjust loads in response to grid signals or system conditions. This includes the potential ability to dynamically pause or shift non-urgent training workloads, turning what was once a firm data center load into a flexible resource that can respond to real-time grid needs and outages.
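The load-flexibility idea can be sketched in a few lines. In the hypothetical Python example below, a site receives a request to hold its draw under a target and pauses deferrable jobs, largest first, until it complies. The job names, power figures, and the `select_jobs_to_pause` function are all invented for illustration; a real controller would also weigh checkpointing cost, contractual commitments, and how quickly each workload can actually ramp down.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    power_mw: float
    deferrable: bool  # True for non-urgent work such as training runs


def select_jobs_to_pause(jobs, target_mw):
    """Pause deferrable jobs, largest first, until total load is at or below target.

    Returns the names of the paused jobs and the remaining load in MW.
    If pausing every deferrable job is not enough, the remaining load
    will still exceed the target.
    """
    total = sum(job.power_mw for job in jobs)
    paused = []
    deferrable = sorted(
        (job for job in jobs if job.deferrable),
        key=lambda job: job.power_mw,
        reverse=True,
    )
    for job in deferrable:
        if total <= target_mw:
            break
        paused.append(job.name)
        total -= job.power_mw
    return paused, total


# ASSUMPTION: an invented workload mix totalling 30 MW.
jobs = [
    Job("inference-serving", 10.0, deferrable=False),
    Job("training-a", 12.0, deferrable=True),
    Job("training-b", 6.0, deferrable=True),
    Job("fine-tune", 2.0, deferrable=True),
]

# Hypothetical grid signal: hold the site at or below 20 MW.
paused, remaining = select_jobs_to_pause(jobs, target_mw=20.0)
print("Paused:", paused)
print("Remaining load (MW):", remaining)
```

Pausing the largest deferrable job first keeps the number of interrupted workloads small, though an operator might just as reasonably prefer to pause the cheapest job to restart.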

Modular solutions, such as pre-engineered containers that unify power delivery, busway distribution, and transformers, are also emerging as a way to reduce on-site build times and construction risk while delivering 100 kW or more to each power-hungry rack.

Lessons from the field

After working across various industries, one thing becomes clear: the power decisions you make early are the ones you live with the longest. Facilities designed with flexibility in mind are far better positioned to adapt as power needs develop and regulations evolve. Breakthroughs in chips and software will matter, but they only go so far if the underlying power systems can’t keep up.

Solving the AI factory power challenge means looking beyond traditional metrics and focusing on how power infrastructure is actually planned, built, and brought online in the new AI data center world. As we look toward 2027, the winners will be those who continue to innovate to solve the time-to-power problem across the entire chain, recognizing that the future will be shaped as much by the physical electron path as by the GPUs and algorithms it powers.

Drew Gravitt is Senior Director of Distributed Generation & Microgrid Sales at Mission Critical Group (MCG), a leading provider of critical power infrastructure solutions headquartered in McKinney, Texas. Gravitt brings deep expertise in distributed energy, building automation, and cloud-based software applications, with a passion for sustainability and tailored energy management solutions. Prior to joining MCG, he served as Director of Strategic Partnerships at Schneider Electric, where he spent over a decade focused on Energy-as-a-Service and helping consumers and communities improve energy resilience and reduce utility costs through microgrids and innovative financing structures. This Expert Opinion is exclusive to Broadband Breakfast.

Broadband Breakfast accepts commentary from informed observers of the broadband scene. Please send pieces to commentary@breakfast.media. The views expressed in Expert Opinion pieces do not necessarily reflect the views of Broadband Breakfast and Breakfast Media LLC.
